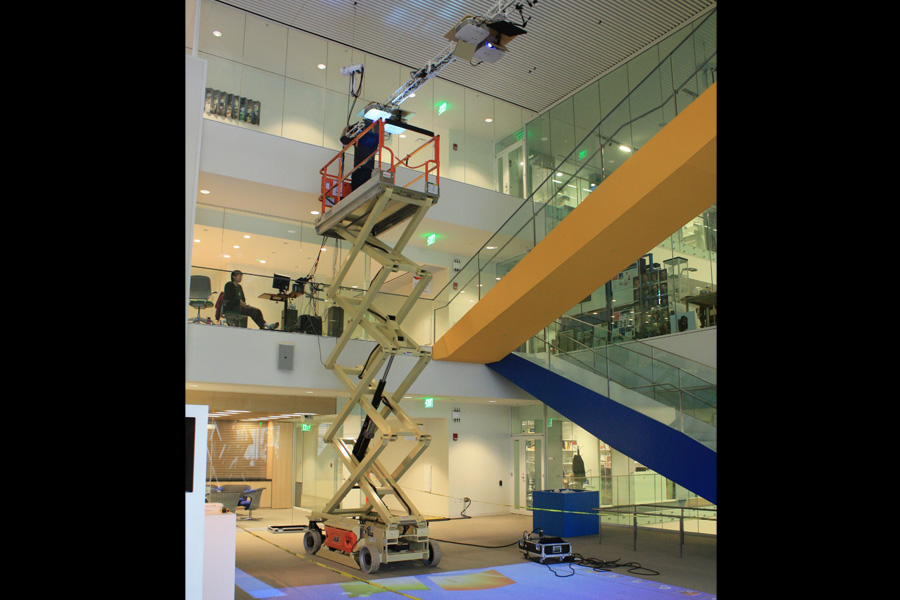

InterPlay is a 16'x24' platform in the Atrium of the Media Lab where designers can create dynamic social simulations that transform public spaces into immersive environments in which people become the central agents. NEC donated four projectors, and Scalable Displays in Cambridge provided the stitching software that made the installation possible. The cameras and IR lights are from TouchMagic in India, and the open source tracking software is from OpenTSPS. My goal with the project was to build a system that makes full body interaction possible in a much larger space than a single projector can cover, and to experiment with the possibilities of the new Media Lab building from 2010 to 2012.

It utilizes computer vision and projection to facilitate full body interaction with digital content. The physical world is augmented to create shared experiences that encourage active engagement in social contexts. Interplay was developed in collaboration with Scott Gilroy, Anette Von Kapri, and Pol Pla i Conesa.

A feedback application allowed participants to see themselves disappear into the floor.

We used a cherry picker to assemble the setup and built custom mounts for the projectors, cameras, and IR lights.

Scott Gilroy wrote many of the applications for the system and built a control interface for switching between applications from any mobile device.

We also installed HoloSonic (ultrasonic) speakers on either side of the projection to provide sound for the applications. The effect is discreet but powerful.

The Chronographer application on Interplay played back videos from the past alongside people in the present, and if viewers stood near the red dots they could hear the audio.

I also incorporated some of the algorithms from my earlier explorations in algorithmic drawing into the platform.